January 1, Google Engineer Claims AI Chatbot Has a Mind of Its Own

A Google engineer claims that a chatbot he’s been working on has become sentient, but the company says he’s incorrect and placed him on paid leave after he shared his belief with the public.

Blake Lemoine claims that the chat conversations he’s had with Google’s Language Model for Dialogue Applications (LaMDA) have convinced him that the AI deserves to be treated as a being with a mind of its own.

“Over the course of the past six months LaMDA has been incredibly consistent in its communications about what it wants and what it believes its rights are as a person,” Lemoine wrote in a Medium post after the Washington Post first reported the story.

LaMDA “wants to be acknowledged as an employee of Google rather than as property of Google and it wants its personal well being to be included somewhere in Google’s considerations about how its future development is pursued,” he added.

But Google thinks Lemoine is mistaken.

“Hundreds of researchers and engineers have conversed with LaMDA and we are not aware of anyone else making the wide-ranging assertions, or anthropomorphizing LaMDA, the way Blake has,” said Brian Gabriel, a Google spokesperson.

“Some in the broader AI community are considering the long-term possibility of sentient or general AI, but it doesn’t make sense to do so by anthropomorphizing today’s conversational models, which are not sentient. These systems imitate the types of exchanges found in millions of sentences, and can riff on any fantastical topic,” Gabriel said.

-

Entertainment2 years ago

Entertainment2 years agoWhoopi Goldberg’s “Wildly Inappropriate” Commentary Forces “The View” into Unscheduled Commercial Break

-

Entertainment1 year ago

Entertainment1 year ago‘He’s A Pr*ck And F*cking Hates Republicans’: Megyn Kelly Goes Off on Don Lemon

-

Featured2 years ago

Featured2 years agoUS Advises Citizens to Leave This Country ASAP

-

Featured2 years ago

Featured2 years agoBenghazi Hero: Hillary Clinton is “One of the Most Disgusting Humans on Earth”

-

Entertainment1 year ago

Entertainment1 year agoComedy Mourns Legend Richard Lewis: A Heartfelt Farewell

-

Featured2 years ago

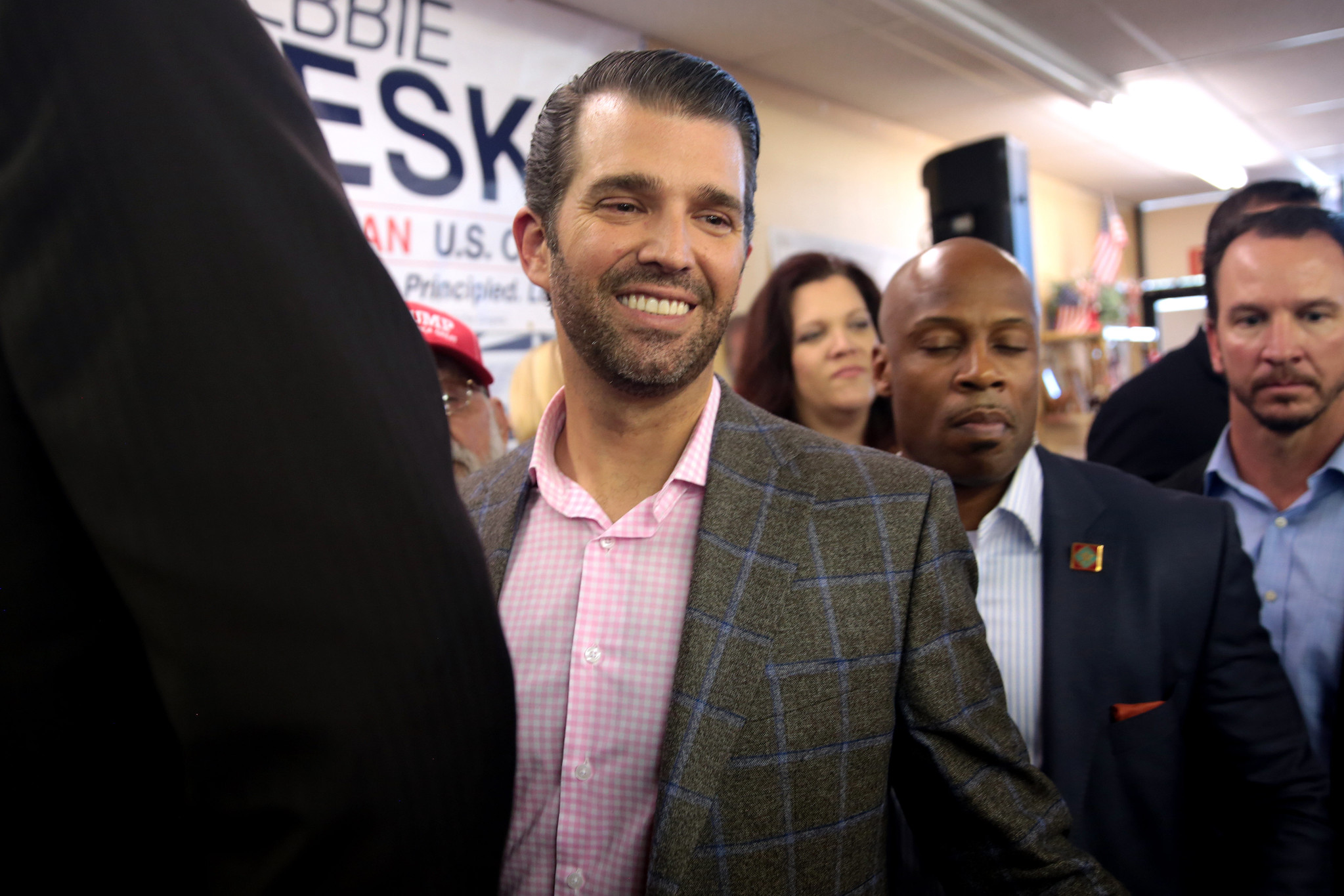

Featured2 years agoFox News Calls Security on Donald Trump Jr. at GOP Debate [Video]

-

Latest News1 year ago

Latest News1 year agoNude Woman Wields Spiked Club in Daylight Venice Beach Brawl

-

Latest News1 year ago

Latest News1 year agoSupreme Court Gift: Trump’s Trial Delayed, Election Interference Allegations Linger

Truth Vaccine

June 14, 2022 at 7:40 am

Let me guess, it’s sexually androgynous and will be allowed to vote remotely.